Poster | 6th Internet World Congress for Biomedical Sciences |

Marcelino Martinez Sober(1), Emilio Soria Olivas(2), Antonio J. Serrano López(3), Alfredo Rosado(4), José David Martín Guerrero(5)

(1)Universidad de Valencia - Burjassot. Spain

(2)Dpto. Ingeniería Electrónica. Facultad de Físicas - Burjassot/Valencia. Spain

(3)Dpto. Electrónica. Universidad de Valencia - Burjassot. Spain

(4)Departamento de Electrónica. Universidad de Valencia - Burjassot. Spain

(5)G.P.D.S. Departament d´Enginyeria Electrònica. Universitat de València - Burjassot. Spain

[New Technology] |

[Cardiovascular Diseases] |

[Obstetrics & Gynecology] |

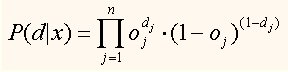

To derive the proposed algorithm, we follow a procedure analogous to the one described by Rumelhart (2). In his approach, the non-linear function applied to the filter output is determined by the use given to the system, in this case binary classification. Rumelhart finds an expression for the probability of obtaining a set of outputs, d, from the different inputs, x:

[1]

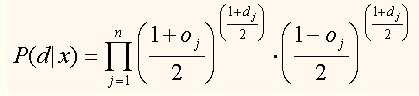

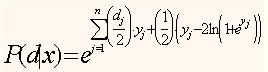
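For orientation, the likelihood that this kind of argument leads to for independent binary targets is usually written as a product of Bernoulli terms. The block is a sketch in assumed notation (d_j in {0, 1} for the desired output, O_j in (0, 1) for the system output given x_j, N samples); it is a reading aid and not a transcription of expression [1].

```latex
P(d \mid x) \;=\; \prod_{j=1}^{N} O_j^{\,d_j}\,\bigl(1 - O_j\bigr)^{\,1 - d_j}
```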

Taking the logarithm of expression [1] gives the cross entropy between the output of the system and the desired signal (2). The output and desired signals used by Rumelhart range from 0 to 1; since in the proposed system these signals vary from -1 to +1, a change of variables must be performed. With the new variables, we obtain a new expression for the conditional probability, given by

[2]

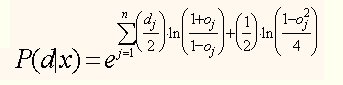

which, after some manipulation, leads to:

[3]

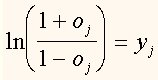

and, by defining:

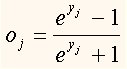

[4]

where yj is the output of the adaptive filter (yj = W(j)×x^T(j)), so:

[5]

We have thus derived a procedure to calculate Oj. Substituting this result into expression [3], we obtain:

[6]

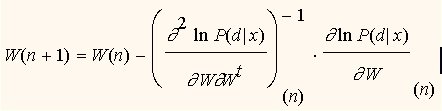
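The change of variables from the 0..1 range to the -1..+1 range can be checked numerically. The following is a minimal sketch, assuming the usual Bernoulli log-likelihood for 0/1 signals and the mapping v -> 2v - 1; the function names and the sample values are hypothetical and are not taken from the poster.

```python
import math


def log_likelihood_01(d, o):
    """Log-likelihood for targets d in {0, 1} and outputs o in (0, 1)."""
    return sum(dj * math.log(oj) + (1 - dj) * math.log(1 - oj)
               for dj, oj in zip(d, o))


def log_likelihood_pm1(d, o):
    """Same quantity written for signals in the -1..+1 range."""
    return sum(0.5 * (1 + dj) * math.log(0.5 * (1 + oj))
               + 0.5 * (1 - dj) * math.log(0.5 * (1 - oj))
               for dj, oj in zip(d, o))


def to_pm1(values):
    """Map values from the 0..1 range to the -1..+1 range (v -> 2v - 1)."""
    return [2 * v - 1 for v in values]


# Hypothetical example data (assumption, for illustration only).
d01 = [1, 0, 1, 1, 0]
o01 = [0.9, 0.2, 0.6, 0.7, 0.4]

ll_01 = log_likelihood_01(d01, o01)
ll_pm1 = log_likelihood_pm1(to_pm1(d01), to_pm1(o01))
print(ll_01, ll_pm1)  # the two values should agree up to rounding
```

Both functions evaluate the same log-likelihood, so the change of variables alters only the way the expression is written, not its value.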

Our aim is to maximise the natural logarithm of expression [6] (maximum likelihood) with respect to the filter coefficients W(j), so as to maximise the probability of obtaining the outputs, d, from the inputs, x. One iterative method for solving this problem is the Newton-Raphson method (3):

[7]

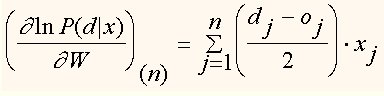

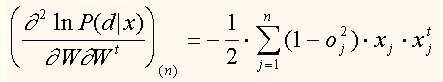

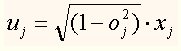
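To illustrate how a Newton-Raphson iteration maximises a log-likelihood of this kind, here is a minimal one-weight sketch. It assumes, purely for illustration, that the output is O_j = tanh(w × x_j) with targets in {-1, +1}; under that assumption the first derivative is the sum of (d_j - O_j) × x_j and the second derivative is minus the sum of (1 - O_j^2) × x_j^2. The data and all names are hypothetical.

```python
import math


def gradient_and_hessian(w, xs, ds):
    """First and second derivative of the log-likelihood with respect to w.

    Assumes O_j = tanh(w * x_j) and targets d_j in {-1, +1} (an assumption
    made for this sketch, not a formula taken from the poster).
    """
    grad = 0.0
    hess = 0.0
    for x, d in zip(xs, ds):
        o = math.tanh(w * x)
        grad += (d - o) * x
        hess -= (1.0 - o * o) * x * x
    return grad, hess


def newton_raphson(xs, ds, w0=0.0, iterations=20):
    """Iterate w <- w - gradient / hessian to maximise the log-likelihood."""
    w = w0
    for _ in range(iterations):
        grad, hess = gradient_and_hessian(w, xs, ds)
        if hess == 0.0:  # no curvature information left, stop iterating
            break
        w -= grad / hess
    return w


# Hypothetical data; deliberately not separable by the sign of x,
# so that the maximum-likelihood weight is finite.
xs = [1.0, 2.0, -1.0, -2.0, 0.5]
ds = [1, 1, -1, -1, -1]

w_hat = newton_raphson(xs, ds)
final_gradient = gradient_and_hessian(w_hat, xs, ds)[0]
print(w_hat, final_gradient)  # the gradient should be close to zero
```

With perfectly separable data the likelihood has no finite maximum and the weight keeps growing, which is why the example includes one conflicting sample.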

Calculating the aforementioned derivatives, we obtain:

[8]

[9]

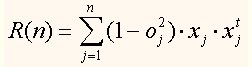

If we define a new variable:

[10]

[11]

then the second derivative can be obtained recursively as:

[12]

and we can apply the matrix inversion lemma (4), which is the basis of recursive algorithms.
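The role of the matrix inversion lemma can be illustrated with its rank-one form (Sherman-Morrison): when a symmetric matrix R is updated by adding z z^T, its inverse can be updated directly, without a new inversion. The sketch uses hypothetical names (R, P, z) and a hand-picked 2×2 example; whether z corresponds exactly to the variable defined in [10] is an assumption.

```python
def mat_vec(A, v):
    """Multiply matrix A (list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in A]


def rank_one_inverse_update(P, z):
    """Given symmetric P = R^-1, return (R + z z^T)^-1 without inverting.

    Uses P_new = P - (P z)(P z)^T / (1 + z^T P z), which is the matrix
    inversion lemma specialised to a rank-one update.
    """
    Pz = mat_vec(P, z)
    denom = 1.0 + sum(zi * pzi for zi, pzi in zip(z, Pz))
    n = len(z)
    return [[P[i][k] - Pz[i] * Pz[k] / denom for k in range(n)]
            for i in range(n)]


# Hypothetical example: R is diagonal, so its inverse P is known exactly.
R = [[2.0, 0.0], [0.0, 4.0]]
P = [[0.5, 0.0], [0.0, 0.25]]
z = [1.0, 2.0]

P_new = rank_one_inverse_update(P, z)
R_new = [[R[i][k] + z[i] * z[k] for k in range(2)] for i in range(2)]

# Multiplying the updated inverse by the updated matrix should give the identity.
product = [[sum(P_new[i][m] * R_new[m][k] for m in range(2)) for k in range(2)]
           for i in range(2)]
print(product)
```

Each update costs a few matrix-vector products instead of a full inversion, which is what makes a sample-by-sample recursion practical.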

This procedure has a major drawback as the system approaches the optimum: the gradient term does not vanish, because it accumulates all contributions since the first iteration, so the weights keep adapting. The simplest solution is to keep only the last term of the summation in the expression for the gradient.
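In symbols, writing g_j for the contribution of sample j to the gradient (a name introduced here only for illustration), this simplification can be sketched as:

```latex
\nabla_{W} \ln P \;=\; \sum_{j=1}^{n} g_j
\qquad\longrightarrow\qquad
\nabla_{W} \ln P \;\approx\; g_n
```

With only g_n retained, the correction applied at iteration n depends on the current sample alone.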

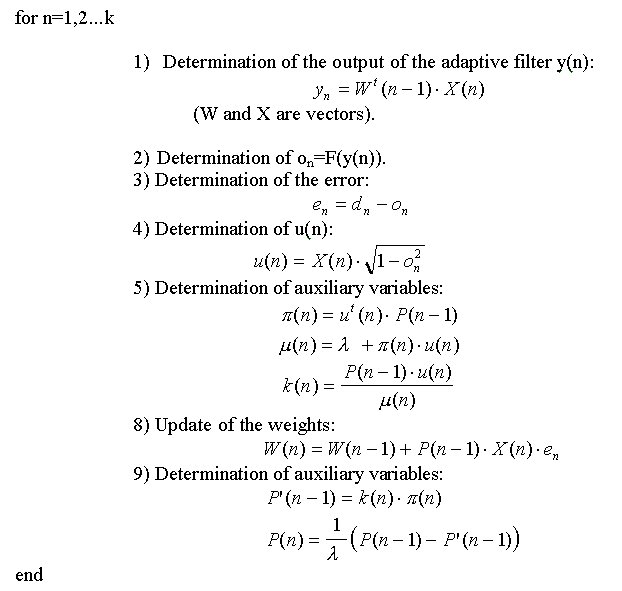

The resulting algorithm is as follows:

Initialization of the variables P(0) and W(0), with P(0) of the form δ⁻¹×I (where I is the identity matrix and δ is a very small value).
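One way such a recursion could look in practice is sketched here. Only the initialization comes from the text; everything else is an assumption made for illustration: the output is taken as O = tanh(W·x), the gradient keeps only the current sample, and P is updated with the rank-one matrix inversion lemma using the scaled input sqrt(1 - O^2)·x. The function names, the small constant (called delta here) and the data are hypothetical.

```python
import math


def train_recursive(samples, targets, delta=0.01, epochs=5):
    """Sketch of a recursive Newton-type update for a +/-1 classifier.

    Assumptions (not taken from the poster): O = tanh(W . x), the gradient
    uses only the current sample, and P tracks the inverse of the
    accumulated sum of (1 - O^2) x x^T via the matrix inversion lemma.
    """
    n = len(samples[0])
    W = [0.0] * n                                        # W(0) = 0
    P = [[(1.0 / delta if i == k else 0.0) for k in range(n)]
         for i in range(n)]                              # P(0) = I / delta
    for _ in range(epochs):
        for x, d in zip(samples, targets):
            y = sum(wi * xi for wi, xi in zip(W, x))     # linear filter output
            o = math.tanh(y)                             # non-linear output
            s = math.sqrt(max(0.0, 1.0 - o * o))
            z = [s * xi for xi in x]                     # scaled input
            # Rank-one update of P (matrix inversion lemma).
            Pz = [sum(P[i][k] * z[k] for k in range(n)) for i in range(n)]
            denom = 1.0 + sum(zi * pzi for zi, pzi in zip(z, Pz))
            P = [[P[i][k] - Pz[i] * Pz[k] / denom for k in range(n)]
                 for i in range(n)]
            # Weight update driven by the current error only.
            Px = [sum(P[i][k] * x[k] for k in range(n)) for i in range(n)]
            W = [wi + (d - o) * pxi for wi, pxi in zip(W, Px)]
    return W


def classify(W, x):
    """Return +1 or -1 according to the sign of the filter output."""
    return 1 if sum(wi * xi for wi, xi in zip(W, x)) >= 0 else -1


# Hypothetical two-dimensional data: the class is the sign of the first input.
samples = [(1.0, 0.5), (-1.0, 0.3), (0.8, -0.4),
           (-0.7, -0.6), (1.2, 0.1), (-0.9, 0.2)]
targets = [1, -1, 1, -1, 1, -1]

W = train_recursive(samples, targets)
predictions = [classify(W, x) for x in samples]
print(W, predictions)
```

A small delta makes P(0) large, so the first samples move the weights strongly; as P shrinks with each update, later corrections become progressively smaller.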
